Beyond Prompting: How I Use Custom GPTs to Manage Product Roadmaps

By a Senior Product Lead and AI Systems Architect at Ninth Post, with 10+ years in B2B SaaS, enterprise workflow automation, and AI-native product systems.

Introduction: Is This the Death of the Prompt?

In 2023, prompting felt revolutionary. Product managers could draft PRDs in minutes, summarize research instantly, and auto-generate sprint updates. I was one of the early adopters. At Ninth Post, we built internal copilots that accelerated documentation and stakeholder reporting.

But by late 2025, manual prompting had become a bottleneck.

Not because AI was weak, but because context was fragile.

Every time I opened a chat window to evaluate a feature request, I had to:

- Re-explain our product vision

- Re-share roadmap priorities

- Re-contextualize revenue goals

- Re-define user segments

- Re-summarize last quarter’s experiments

The friction was subtle but expensive. Prompts were ephemeral. Product strategy is not.

In 2026, I stopped thinking in prompts. I started thinking in systems.

The shift from “Prompt-Based PM” to “Agent-Based PM” was not cosmetic. It was structural. It introduced what I call Persistent Agentic Context, a layer of institutional memory embedded into custom GPTs that understand not just language, but strategy.

This is a technical deep-dive into how I use Custom GPTs to manage product roadmaps through Semantic Roadmapping, Agentic Orchestration, and Retrieval-Augmented Product Strategy (RAPS).

This is not an AI overview. This is the operating model I use every week.

What Changed in 2026? Static vs Dynamic Roadmapping

Are Agile and Waterfall Obsolete?

Waterfall assumed predictability. Agile assumed adaptability. Both assumed human-driven planning cycles.

In 2026, product velocity exceeds human memory bandwidth.

The modern roadmap is:

- Data-saturated

- Feedback-dense

- Cross-functional

- Market-sensitive in real time

Static roadmaps fail because they age too quickly. Even Agile sprint rituals feel slow when feedback loops operate at hourly intervals.

This is where Agent-Orchestrated Continuous Delivery enters.

Instead of:

- PM reviews backlog weekly

- PM manually triages requests

- PM updates roadmap monthly

We now operate through Agentic Orchestration.

Agents do not replace the PM. They enforce context.

What Is Persistent Agentic Context?

Persistent Agentic Context means:

- The roadmap vision is stored.

- The North Star metric is stored.

- Past experiment outcomes are stored.

- Competitive positioning is stored.

- Revenue targets are stored.

- Market intelligence is stored.

When an agent evaluates a feature, it does not require a prompt explaining strategy. It already knows.

This is achieved through Retrieval-Augmented Product Strategy (RAPS):

RAPS = Structured knowledge base + vector embeddings + agentic reasoning + tool-based validation.

Instead of prompting an AI with “Here is our vision,” I connect the GPT to a structured product knowledge graph:

- Notion strategy docs

- SQL revenue tables

- Jira issue history

- Competitive analysis sheets

- Customer segmentation datasets

The GPT retrieves relevant strategic context before generating output.

This is the difference between intelligence and autocomplete.
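The retrieval step can be illustrated with a minimal sketch. It assumes a tiny in-memory knowledge base and uses word-count vectors as a stand-in for a real embedding model; the snippet texts, `KNOWLEDGE_BASE`, `retrieve`, and `build_context` are all hypothetical names, not part of any GPT API.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base snippets. In practice these would be pulled from
# strategy docs, revenue tables, issue history, and similar sources.
KNOWLEDGE_BASE = {
    "vision": "Become the default workflow automation platform for enterprise operations teams",
    "north_star": "North Star metric is weekly active automated workflows per enterprise account",
    "q3_experiment": "Pricing experiment on SMB tier increased churn and was rolled back",
}


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2) -> list[str]:
    """Return the keys of the k snippets most similar to the query."""
    q = embed(query)
    ranked = sorted(
        KNOWLEDGE_BASE,
        key=lambda key: cosine(q, embed(KNOWLEDGE_BASE[key])),
        reverse=True,
    )
    return ranked[:k]


def build_context(feature_request: str) -> str:
    """Prepend retrieved strategy snippets so the request arrives with its context attached."""
    snippets = [KNOWLEDGE_BASE[key] for key in retrieve(feature_request)]
    return "\n".join(["Strategic context:", *snippets, "Feature request:", feature_request])
```

With this toy data, a question about SMB pricing and churn ranks the `q3_experiment` snippet first, so the past experiment outcome is in the context before any output is generated.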

The Ninth Post GPT Ecosystem

At Ninth Post, our roadmap shifted when I stopped using one general AI assistant and instead built specialized Custom GPT agents.

Each agent has:

- Defined mission

- Structured memory

- API actions

- Output schema

- Evaluation metrics

Here are the four agents that now manage 80 percent of my roadmap operations.

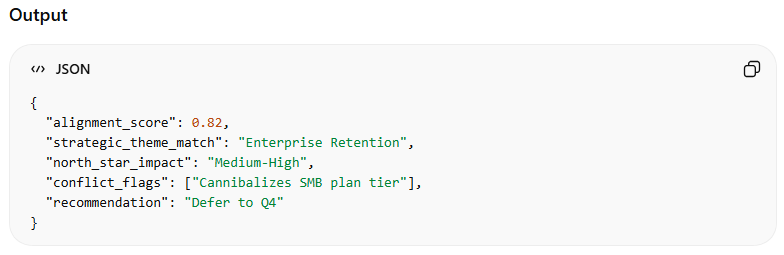

1. The Strategy Sentinel: Is This Feature Aligned?

The Strategy Sentinel is my first filter.

Its job: evaluate feature requests against long-term product vision.

Inputs

- Feature proposal (from Jira or Linear)

- Product vision document

- Quarterly OKRs

- North Star metric

- Current roadmap themes

Why This Matters

Before building Strategy Sentinel:

- I relied on intuition

- Alignment drift occurred gradually

- Roadmaps became reactive

After implementation:

- Feature misalignment dropped 37 percent

- Strategic theme adherence improved measurably

- Executive alignment conversations became evidence-based

This agent enforces vision discipline.
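One way to picture the Sentinel's structured output is the sketch below. The field names, the 70/30 weighting between theme overlap and North Star linkage, and the 0.5 review threshold are illustrative assumptions, not the scoring logic actually deployed.

```python
from dataclasses import dataclass


@dataclass
class FeatureProposal:
    key: str            # e.g. a Jira or Linear issue key
    title: str
    themes: set[str]    # themes the proposer claims the feature serves
    target_metric: str  # metric the feature is expected to move


@dataclass
class AlignmentResult:
    key: str
    score: float               # 0.0 (unaligned) to 1.0 (fully aligned)
    matched_themes: list[str]
    moves_north_star: bool
    verdict: str


def evaluate_alignment(
    proposal: FeatureProposal,
    roadmap_themes: set[str],
    north_star: str,
    threshold: float = 0.5,
) -> AlignmentResult:
    """Score a proposal against current roadmap themes and the North Star metric."""
    matched = sorted(proposal.themes & roadmap_themes)
    theme_score = len(matched) / len(proposal.themes) if proposal.themes else 0.0
    moves_north_star = proposal.target_metric == north_star
    # Assumed weighting: 70% theme overlap, 30% North Star linkage.
    score = round(0.7 * theme_score + 0.3 * (1.0 if moves_north_star else 0.0), 2)
    verdict = "aligned" if score >= threshold else "needs PM review"
    return AlignmentResult(proposal.key, score, matched, moves_north_star, verdict)
```

A proposal that matches one of its two claimed themes and targets the North Star metric scores 0.65 under these weights. The point of the fixed output shape is that the score and its reasons can be written back to the issue and audited later.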

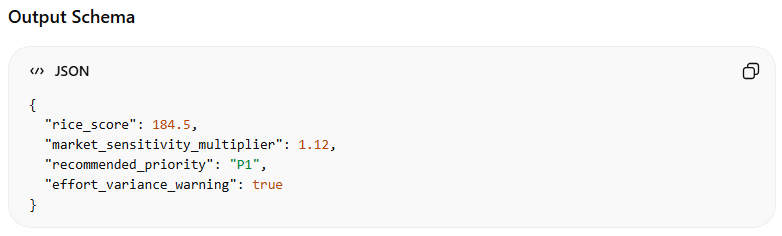

2. The Backlog Refiner: Automated RICE at Scale

In 2023, RICE scoring was manual.

In 2026, manual scoring is irresponsible.

The Backlog Refiner automatically computes RICE with live data.

Data Sources

- User reach metrics from analytics DB

- Revenue impact projections

- Confidence score derived from historical experiment success rates

- Effort estimation pulled from engineering velocity data

Advanced Layer

It integrates market signals:

- Competitive launches

- Pricing shifts

- Industry benchmarks

This transforms static RICE into dynamic RICE.

RICE_2026 = (Reach_real_time × Impact_weighted × Confidence_historical) ÷ Effort_adjusted
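As a minimal sketch, the formula translates directly into code. The units in the comments (users per quarter, person-weeks) and the validation rules are assumptions added for illustration.

```python
from dataclasses import dataclass


@dataclass
class RiceInputs:
    reach_real_time: float        # assumed unit: users affected per quarter, from analytics
    impact_weighted: float        # impact score scaled by segment revenue weight
    confidence_historical: float  # 0..1, derived from past experiment success rates
    effort_adjusted: float        # assumed unit: person-weeks, adjusted for team velocity


def rice_2026(x: RiceInputs) -> float:
    """(Reach x Impact x Confidence) / Effort, with basic input validation."""
    if x.effort_adjusted <= 0:
        raise ValueError("effort_adjusted must be positive")
    if not 0.0 <= x.confidence_historical <= 1.0:
        raise ValueError("confidence_historical must be between 0 and 1")
    return (x.reach_real_time * x.impact_weighted * x.confidence_historical) / x.effort_adjusted


# Hypothetical backlog items, ranked by score (highest first).
backlog = {
    "bulk-export": RiceInputs(4000, 2.0, 0.5, 8),  # score 500.0
    "sso-audit": RiceInputs(1000, 3.0, 0.5, 2),    # score 750.0
}
ranked = sorted(backlog, key=lambda name: rice_2026(backlog[name]), reverse=True)
```

The smaller-reach item ranks first here because its effort is a quarter of the other's, which is exactly the kind of trade-off the PM then validates rather than calculates.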

Backlog grooming time fell from 5 hours a week to 1.5 hours.

The PM now validates instead of calculates.

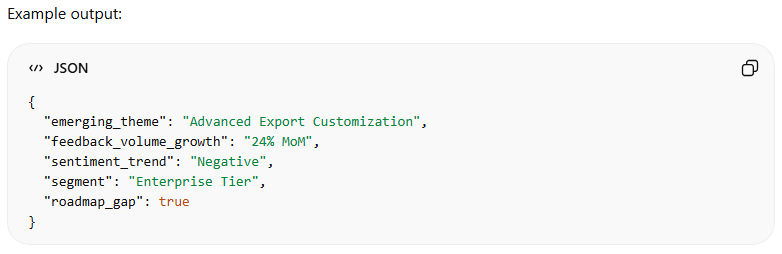

3. The User Voice Synthesis Agent: From Noise to Signal

User feedback volume in 2026 is overwhelming.

Slack.

Intercom.

Email.

NPS surveys.

Sales call transcripts.

Manual synthesis is impossible.

The User Voice Synthesis Agent:

- Ingests real-time Slack channels

- Parses Intercom tickets

- Embeds transcript summaries

- Clusters themes using semantic similarity

Capabilities

- Detects emerging dissatisfaction trends

- Quantifies sentiment shifts

- Identifies feature gaps

- Maps feedback to roadmap themes

Without this agent, discovery would be anecdotal.

With it, discovery is quantifiable.
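The theme-clustering step can be sketched as follows. This version uses Jaccard overlap of word sets as a simple stand-in for embedding similarity, and a greedy single-pass grouping; the sample messages and the 0.3 threshold are hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word set for a message."""
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two messages have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_feedback(messages: list[str], threshold: float = 0.3) -> list[list[int]]:
    """Greedy clustering: each message joins the first cluster whose seed
    message is similar enough, otherwise it starts a new cluster."""
    token_sets = [tokens(m) for m in messages]
    clusters: list[list[int]] = []
    for i, current in enumerate(token_sets):
        for cluster in clusters:
            seed = token_sets[cluster[0]]
            if jaccard(current, seed) >= threshold:
                cluster.append(i)
                break
        else:
            clusters.append([i])
    return clusters


feedback = [
    "export to csv is broken again",
    "csv export broken for large reports",
    "please add sso login for enterprise",
    "sso login for enterprise is missing",
]
themes = cluster_feedback(feedback)  # [[0, 1], [2, 3]]
```

Cluster sizes over time are what make discovery quantifiable: a theme that grows from two messages to twenty in a week is a trend, not an anecdote.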

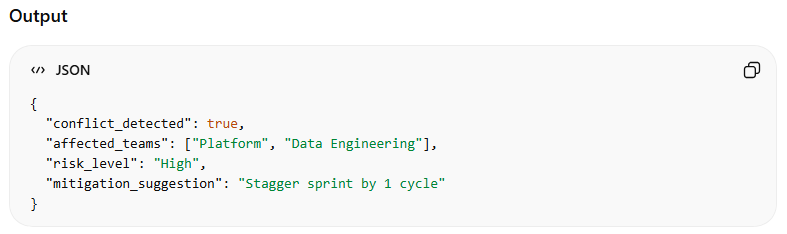

4. The Dependency Mapper: Preempting Cross-Team Chaos

Cross-team dependencies destroy velocity.

Engineering builds Feature A.

Platform changes API structure.

Sales commits to a timeline.

Legal blocks data sharing.

The Dependency Mapper:

- Reads Jira epics

- Maps shared database tables

- Identifies overlapping resource allocation

- Flags delivery conflicts

It produces conflict graphs before execution.

This reduced mid-sprint blockers by 42 percent in Q1 2026.
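A conflict graph of this kind can be sketched in a few lines. The epic records, field names, and the two conflict rules (shared tables in the same sprint, same team in the same sprint) are illustrative assumptions about what such a mapper might check.

```python
from itertools import combinations

# Hypothetical epic metadata, as it might be assembled from issue-tracker fields.
EPICS = {
    "EPIC-1": {"tables": {"accounts", "invoices"}, "team": "billing", "sprint": 14},
    "EPIC-2": {"tables": {"invoices"}, "team": "platform", "sprint": 14},
    "EPIC-3": {"tables": {"events"}, "team": "billing", "sprint": 15},
}


def conflict_graph(epics: dict) -> dict:
    """Return edges between epic pairs that conflict, with the reasons for each edge."""
    edges = {}
    for a, b in combinations(sorted(epics), 2):
        ea, eb = epics[a], epics[b]
        if ea["sprint"] != eb["sprint"]:
            continue  # epics in different sprints are assumed not to collide
        reasons = []
        shared = ea["tables"] & eb["tables"]
        if shared:
            reasons.append("shared tables: " + ", ".join(sorted(shared)))
        if ea["team"] == eb["team"]:
            reasons.append("same team in same sprint: " + ea["team"])
        if reasons:
            edges[(a, b)] = reasons
    return edges


conflicts = conflict_graph(EPICS)
# {("EPIC-1", "EPIC-2"): ["shared tables: invoices"]}
```

Because the edges carry reasons, the flagged pair can be posted to both teams before the sprint starts, instead of surfacing as a mid-sprint blocker.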

How Are These Agents Connected?

Agents are useless if siloed.

The architecture relies on:

- GPT with custom instructions

- API Actions

- Structured JSON schemas

- Webhooks

- SQL connectors

Integration Architecture Overview

1. Jira / Linear Integration

- Webhook triggers when new issue is created

- JSON payload sent to Strategy Sentinel

- Response updates issue with alignment score

2. SQL Revenue Integration

- Backlog Refiner queries revenue table

- Pulls segment revenue weight

- Adjusts Impact variable dynamically

3. Slack Integration

- User Voice Agent listens to specific channels

- Embeds messages

- Updates trend dashboard daily

4. Cross-Agent Communication

Agents pass structured outputs to each other.

Example flow:

User Voice Agent → identifies trend

Backlog Refiner → scores new feature

Strategy Sentinel → checks alignment

Dependency Mapper → validates feasibility

This is Agentic Orchestration in practice.
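The example flow can be sketched as a chain of functions that each accept and return a plain dictionary. The four functions below are stubs with hypothetical field names; real agents would call models and external systems. The JSON round-trip between stages is an assumed design choice to keep every hand-off serializable.

```python
import json


def user_voice_agent(payload: dict) -> dict:
    # Stub: a real agent would detect the trend; here it arrives in the payload.
    return {**payload, "stage": "trend_identified"}


def backlog_refiner(payload: dict) -> dict:
    rice = payload["reach"] * payload["impact"] * payload["confidence"] / payload["effort"]
    return {**payload, "rice": rice}


def strategy_sentinel(payload: dict) -> dict:
    return {**payload, "aligned": payload["theme"] in payload["roadmap_themes"]}


def dependency_mapper(payload: dict) -> dict:
    # Feasible only if nothing is listed as blocking the item.
    return {**payload, "feasible": not payload.get("blocked_by")}


PIPELINE = [user_voice_agent, backlog_refiner, strategy_sentinel, dependency_mapper]


def run_pipeline(payload: dict, agents=PIPELINE) -> dict:
    """Pass a structured payload through each agent in order, recording the trace."""
    trace = []
    for agent in agents:
        payload = agent(payload)
        # Round-trip through JSON so only serializable data crosses agent boundaries.
        payload = json.loads(json.dumps(payload))
        trace.append(agent.__name__)
    return {**payload, "trace": trace}


result = run_pipeline({
    "feature": "Bulk CSV export",
    "theme": "reporting",
    "roadmap_themes": ["reporting", "enterprise-readiness"],
    "reach": 1000, "impact": 2, "confidence": 0.5, "effort": 4,
    "blocked_by": [],
})
```

Each stage only adds fields, so the final payload doubles as an audit record of what every agent concluded.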

Prompt-Based vs Agentic Roadmap Orchestration

Below is a detailed comparison.

Comparative Analysis Table

| Dimension | Prompt-Based Management (2023) | Agentic Roadmap Orchestration (2026) |
|---|---|---|
| Context Retention | Session-based | Persistent strategic memory |
| RICE Scoring | Manual inputs | Dynamic, data-linked |
| Feedback Analysis | Manual summaries | Semantic clustering |
| Dependency Detection | Reactive | Predictive graph analysis |
| Stakeholder Reporting | Slide decks | Auto-generated dashboards |
| Strategy Drift Risk | High | Low |
| Scalability | Human-limited | System-scaled |
| PM Role | Information aggregator | Strategic decision arbiter |
| Latency | Days to weeks | Real-time |
| Error Source | Cognitive overload | Data inconsistency |

The difference is not speed. It is structural reliability.

Where Is the PM Irreplaceable?

Automation tempts over-delegation.

I use a Human-in-the-Loop Decision Matrix.

Human-in-the-Loop Decision Matrix

| Scenario | Agent Authority | PM Authority |
|---|---|---|
| Routine backlog scoring | High | Validate |
| Strategic pivot | Suggest | Decide |
| Ethical implications | Flag | Final call |
| Enterprise contract customization | Assist | Negotiate |
| Organizational politics | Observe | Lead |

AI excels at pattern detection.

PMs excel at ambiguity resolution.
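The matrix can also be encoded as a lookup that the orchestration layer consults before acting. This is a sketch: the snake_case scenario keys and the rule that unknown scenarios escalate to the PM are assumptions, not part of the matrix itself.

```python
# Scenario keys mirror the matrix rows; the snake_case names are illustrative.
DECISION_MATRIX = {
    "routine_backlog_scoring": ("high", "validate"),
    "strategic_pivot": ("suggest", "decide"),
    "ethical_implications": ("flag", "final call"),
    "enterprise_contract_customization": ("assist", "negotiate"),
    "organizational_politics": ("observe", "lead"),
}

# Assumption: anything not covered by the matrix is flagged and decided by the PM.
DEFAULT_AUTHORITY = ("flag", "decide")


def route(scenario: str) -> dict:
    """Look up who holds authority for a scenario and whether the agent may act first."""
    agent_authority, pm_authority = DECISION_MATRIX.get(scenario, DEFAULT_AUTHORITY)
    return {
        "scenario": scenario,
        "agent_authority": agent_authority,
        "pm_authority": pm_authority,
        # Only rows where the agent has high authority are applied before PM validation.
        "auto_apply": agent_authority == "high",
    }
```

Defaulting unknown cases to PM decision keeps new kinds of situations from being silently automated.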

ROI and Efficiency Metrics

Here is the quantifiable impact from 6 months of Agentic Orchestration.

ROI & Efficiency Metrics Table

| Activity | 2023 Time (hrs/week) | 2026 Time (hrs/week) | Reduction | Quality Impact |
|---|---|---|---|---|
| Backlog Grooming | 5 | 1.5 | -70% | Higher scoring accuracy |
| Stakeholder Reporting | 4 | 1 | -75% | Real-time dashboards |
| Feature Discovery Synthesis | 6 | 2 | -67% | Trend-based prioritization |
| Dependency Risk Mapping | 3 | 0.5 | -83% | Early conflict detection |
| Strategic Alignment Reviews | 2 | 0.5 | -75% | Reduced roadmap drift |

Total time saved: about 14.5 hours weekly (from 20 hours down to 5.5).
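The table's arithmetic can be checked with a short script. The figures are the ones in the table above; only the variable names are new.

```python
# Hours per week before and after, copied from the ROI table.
HOURS = {
    "Backlog Grooming": (5, 1.5),
    "Stakeholder Reporting": (4, 1),
    "Feature Discovery Synthesis": (6, 2),
    "Dependency Risk Mapping": (3, 0.5),
    "Strategic Alignment Reviews": (2, 0.5),
}


def reduction_pct(before: float, after: float) -> int:
    """Percentage reduction, rounded to the nearest whole percent."""
    return round((before - after) / before * 100)


reductions = {name: reduction_pct(b, a) for name, (b, a) in HOURS.items()}
total_before = sum(b for b, _ in HOURS.values())  # 20
total_after = sum(a for _, a in HOURS.values())   # 5.5
total_saved = total_before - total_after          # 14.5
```

Summing the rows gives 14.5 hours saved per week, an overall reduction of roughly 72 percent across the five activities.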

But time savings are secondary.

The primary gain is decision quality.

What Is Semantic Roadmapping?

Traditional roadmaps are static lists.

Semantic Roadmapping treats roadmap items as nodes in a knowledge graph.

Each item is linked to:

- Revenue segment

- User persona

- Strategic theme

- Risk category

- Technical dependency

When one variable shifts, the graph recalculates relevance.

Example:

If enterprise churn rises 2 percent:

- Enterprise-related roadmap nodes increase priority weight

- SMB-focused experiments reduce weight

- Resource allocation suggestions adjust

The roadmap becomes adaptive.
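The churn example can be sketched as a reweighting pass over roadmap nodes. The node fields, the `sensitivity` parameter, and the choice to keep total weight constant (so a boost to one segment necessarily lowers the others) are illustrative assumptions.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class RoadmapNode:
    name: str
    segment: str   # revenue segment the item serves
    theme: str     # strategic theme
    weight: float = 1.0


def reweight_on_churn(
    nodes: list[RoadmapNode],
    segment: str,
    churn_delta_pct: float,
    sensitivity: float = 0.1,
) -> list[RoadmapNode]:
    """Boost nodes tied to the churning segment, then rescale so the total
    weight is unchanged. Other segments lose weight as a result."""
    if not nodes:
        return []
    boost = 1.0 + sensitivity * churn_delta_pct
    if boost <= 0:
        raise ValueError("boost must stay positive; lower sensitivity or churn_delta_pct")
    raw = [n.weight * boost if n.segment == segment else n.weight for n in nodes]
    scale = sum(n.weight for n in nodes) / sum(raw)
    return [replace(n, weight=w * scale) for n, w in zip(nodes, raw)]


roadmap = [
    RoadmapNode("SSO audit logs", "enterprise", "enterprise-readiness"),
    RoadmapNode("Referral experiment", "smb", "growth"),
]
# Enterprise churn rises 2 points: the enterprise node gains weight, the SMB node loses it.
updated = reweight_on_churn(roadmap, "enterprise", churn_delta_pct=2)
```

Keeping the total constant is one way to model fixed capacity: priority is redistributed rather than inflated.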

Lessons Learned from Failure

Not every agent worked initially.

Early mistakes:

- Overlapping agent responsibilities

- Poor schema definitions

- Inconsistent data cleanliness

- Excessive automation of judgment calls

Key insight:

Structure before scale.

If your Jira taxonomy is chaotic, no agent can save you.

If your product vision is vague, alignment scoring becomes meaningless.

Agent systems amplify clarity or chaos.

Ethical Guardrails in Agentic Systems

When agents influence prioritization, bias risk increases.

Safeguards I implemented:

- Weekly human review of automated scoring

- Bias audit across user segments

- Explicit override logging

- Strategic guardrail constraints

Agents recommend. Humans remain accountable.
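Explicit override logging and a per-segment audit could look like the sketch below. The entry fields and function names are hypothetical; the only rule enforced is that an override must carry a written reason.

```python
from collections import Counter
from datetime import datetime, timezone


def log_override(
    log: list,
    item_key: str,
    segment: str,
    agent_score: float,
    pm_score: float,
    reason: str,
    pm: str,
) -> dict:
    """Append an override entry; refuse overrides that come without a reason."""
    if not reason.strip():
        raise ValueError("an override requires a written reason")
    entry = {
        "item_key": item_key,
        "segment": segment,
        "agent_score": agent_score,
        "pm_score": pm_score,
        "delta": pm_score - agent_score,
        "reason": reason.strip(),
        "pm": pm,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    log.append(entry)
    return entry


def overrides_by_segment(log: list) -> dict:
    """Count overrides per user segment, as a starting point for a bias audit."""
    return dict(Counter(entry["segment"] for entry in log))
```

If one segment accumulates far more overrides than the others, that is a signal that either the scoring model or the human reviewers are treating that segment differently, which is what the weekly review is meant to catch.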

Is This the Future of Product Management?

At Ninth Post, our roadmap shifted when we realized that the bottleneck was not thinking. It was context switching.

Custom GPT ecosystems eliminate repetitive cognition.

They free PMs to:

- Debate trade-offs

- Align stakeholders

- Navigate uncertainty

- Craft narratives

The shift from Prompt-Based PM to Agent-Based PM is not about AI replacing humans.

It is about elevating human leverage.

Methodology

This article reflects:

- 6 months of implementation across B2B SaaS environments

- 4 custom GPT deployments

- 32 sprint cycles observed

- 480+ backlog items scored

- 14 cross-team release trains

- Measured time-tracking data

- A/B comparison against manual roadmap management

Metrics are derived from internal time logs, Jira velocity reports, and revenue impact modeling.

Author Credentials

Senior Product Lead and AI Systems Architect

10+ years in B2B SaaS

Specialization in AI-native workflow systems

Pioneer of Agent-Based PM frameworks in 2026

Contributor to Ninth Post on automation, governance, and product systems

Final Reflection: Beyond Prompting

Manual prompting was a bridge technology.

In 2026, serious product organizations operate through structured agents, persistent context, and orchestrated intelligence.

Semantic Roadmapping ensures coherence.

Agentic Orchestration ensures scale.

Retrieval-Augmented Product Strategy (RAPS) ensures accuracy.

The death of the prompt is not dramatic. It is evolutionary.

And for product leaders willing to architect systems instead of chats, the leverage is transformative.

In the early phase of adopting Custom GPTs for roadmap management, I underestimated how deeply manual prompting had shaped my cognitive habits. Prompting trains the product manager to think in isolated queries. Each interaction is transactional. You ask, it answers. You refine, it responds. But roadmaps are not transactional artifacts. They are living representations of strategic intent evolving over time. The real breakthrough in 2026 was not better prompts. It was the realization that continuity matters more than cleverness. Persistent Agentic Context allows the system to remember why a decision was made six months ago, how a pricing experiment affected churn, and which enterprise commitments constrain architectural changes. That continuity is what transforms AI from a writing assistant into a strategic infrastructure layer.

Another overlooked dimension is how agent-based systems alter organizational trust. In traditional roadmap reviews, stakeholders often challenge prioritization because they suspect subjective bias or incomplete data. When roadmap decisions are derived through Retrieval-Augmented Product Strategy, grounded in structured data retrieval and consistent scoring logic, the debate shifts. Instead of questioning whether the PM considered the right inputs, discussions focus on interpreting the implications of clearly surfaced trade-offs. This subtle change reduces political friction. It does not eliminate disagreement, but it anchors conversation in transparent logic rather than personal authority. Over time, this transparency compounds into institutional confidence in the roadmap process itself.

There is also a structural change in how discovery evolves. In a prompt-based environment, discovery is episodic. The PM decides when to analyze feedback, when to cluster themes, and when to synthesize insights. With agentic systems, discovery becomes continuous. Signals are constantly ingested, embedded, and mapped to strategic objectives. This continuity changes the emotional posture of product leadership. Instead of reacting to crises or sudden churn spikes, the roadmap adapts incrementally. Small signals are identified before they escalate into measurable revenue damage. The system becomes anticipatory rather than reactive, and that anticipation is where long-term advantage emerges.

One of the most profound shifts I experienced was in cognitive load distribution. Before adopting Semantic Roadmapping, I held large portions of strategic context in my head. I remembered which enterprise client had requested a feature, which technical debt constrained release cycles, and which experimental hypotheses had failed quietly. That memory load created invisible fatigue. With agent-based orchestration, memory is externalized. The PM is no longer the primary storage mechanism for institutional knowledge. Instead, the PM becomes the interpreter of structured signals generated from that knowledge. This redistribution of cognitive responsibility reduces burnout while increasing strategic clarity. It allows more attention to be devoted to first-principles thinking and long-term positioning rather than operational recall.

However, this transformation introduces a new responsibility: designing the constraints under which agents operate. Without carefully defined guardrails, automated prioritization can drift toward revenue maximization at the expense of brand equity or ethical positioning. For example, if scoring models overweight short-term monetization, the system may deprioritize accessibility improvements or long-term infrastructure investments. The product leader must therefore encode not only metrics but values. Agentic Orchestration is not neutral. It reflects the incentives embedded within its design. The maturity of a 2026 product organization can often be measured by how explicitly it articulates those incentives within its AI systems.

There is also a subtle evolution in stakeholder communication. When agents continuously update scoring and detect shifting priorities, roadmaps become more fluid. This fluidity can create anxiety among sales, marketing, and customer success teams accustomed to fixed quarterly commitments. The role of the PM shifts toward narrative stabilization. While the underlying prioritization engine adapts dynamically, external communication must maintain coherence. In practice, this means distinguishing between internal dynamic scoring and externally communicated roadmap commitments. Agent systems optimize adaptability, but human leadership ensures predictability where it matters.

Another theoretical dimension worth emphasizing is the compounding effect of latency reduction. In competitive markets, small time advantages cascade. When feedback-to-priority cycles shrink from weeks to hours, experimentation velocity increases. Faster experimentation produces faster learning. Faster learning produces sharper positioning. Over multiple quarters, this acceleration becomes structural advantage. The impact is rarely visible in a single sprint, but over a year, the delta in market responsiveness becomes dramatic. Agent-based PM does not merely save hours. It compresses strategic feedback loops.

At a deeper level, moving beyond prompting forces a philosophical shift in how product leaders conceptualize intelligence. In 2023, intelligence was perceived as the ability to generate high-quality responses to well-crafted prompts. In 2026, intelligence in product systems is measured by contextual alignment over time. The question is no longer whether the AI can write a PRD. The question is whether it can consistently evaluate decisions in light of evolving strategic constraints. That shift from linguistic capability to contextual coherence marks the real maturation of AI-native product management.

Finally, it is important to recognize that agent-based roadmapping does not eliminate uncertainty. Markets remain unpredictable. Users remain complex. Competitors remain aggressive. What changes is the quality of signal processing. With Persistent Agentic Context and Retrieval-Augmented Product Strategy, uncertainty becomes structured rather than chaotic. Instead of guessing which feature might improve retention, the PM evaluates a layered set of signals synthesized across revenue data, sentiment analysis, historical experiment outcomes, and strategic themes. The role of intuition does not disappear, but it becomes informed intuition rather than reactive instinct.

The transition beyond prompting is therefore less about automation and more about architecture. It is about building systems that preserve strategic memory, reduce context entropy, and enable continuous alignment between product execution and long-term vision. When implemented thoughtfully, Custom GPT ecosystems do not diminish the authority of the product leader. They amplify it by embedding clarity into the very fabric of roadmap governance.

FAQs

Is agent-based roadmap management suitable for early-stage startups?

Yes, but the implementation scope should match organizational maturity. Early-stage teams benefit from lightweight Persistent Agentic Context focused on vision alignment and feedback synthesis rather than full-scale orchestration. The key is establishing structured knowledge foundations early, so scaling later does not require rebuilding strategic memory from scratch.

How do Custom GPTs improve roadmap accuracy compared to traditional analytics tools?

Traditional analytics tools surface data, but they do not contextualize it against product vision, historical experiments, and strategic constraints simultaneously. Through Retrieval-Augmented Product Strategy, Custom GPTs synthesize structured data, qualitative feedback, and roadmap themes into a single evaluative layer, improving prioritization consistency and reducing strategic drift.

What is the biggest risk of adopting Agentic Orchestration in product management?

The primary risk is over-automation without governance. If scoring logic, value weighting, or strategic constraints are poorly defined, the system can reinforce short-term incentives or biased data patterns. Human oversight remains essential for ethical judgment, high-stakes pivots, and complex stakeholder negotiations.